When you give an AI agent shell access, file system permissions, email credentials, and persistent memory, you have effectively built a data exfiltration pipeline that runs 24/7 without human oversight. The ClawdBot incident made that clear: plaintext credential storage, exposed control panels, and over 135,000 instances reachable from the public internet.

OpenClaw, the project formerly known as ClawdBot, is just one example. Every major platform is now shipping agentic AI capabilities, from Microsoft’s Copilot agents to Google’s Gemini integrations to the dozens of open-source frameworks appearing on GitHub every week. Integration protocols like MCP are connecting these agents to real-world actions across dozens of services simultaneously.

The pattern is the same everywhere: broad permissions, locally stored credentials, untrusted inputs from messaging platforms and web content, and persistent memory across sessions. For security teams, this is an entirely new class of endpoint risk that traditional defenses were not designed to handle.

Why Agentic AI Changes The Threat Model

The difference between a chatbot and an agentic AI system comes down to authentication and execution. A chatbot takes a prompt, processes it against an LLM, and returns a text response. Unless RAG is enabled, it has no access to the file system, no stored credentials, and no ability to take actions outside of the conversation window.

An agentic AI system operates on the host machine itself. It authenticates to services via OAuth tokens, reads and writes to the local file system, executes shell commands with the user’s privileges, and maintains persistent connections to messaging platforms and SaaS tools. From an operating system perspective, the agent is indistinguishable from the user.

That matters because EDR, DLP, and network monitoring were all built to detect threats that look different from normal user activity. An AI agent making authenticated API calls to Slack, uploading files to Google Drive, and sending emails through OAuth triggers none of those detections. There are no IOCs to match against and no suspicious binaries to sandbox.

Persistent memory makes the exposure worse. Traditional infostealers grab what they can from browser storage or a credential manager during a single session. An agentic AI accumulates credentials, conversation history, project details, and communication patterns across weeks of continuous operation, all stored in plaintext on the local disk. If an attacker compromises the agent, for example through prompt injection or a malicious skill, the volume of data available for exfiltration is orders of magnitude greater.
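To make the plaintext storage problem concrete, here is a minimal sketch of how a security team might check an agent's data directory for credentials sitting on disk. It is an illustration under stated assumptions, not a production scanner: the function names and the directory passed in are hypothetical, and the three patterns cover only a few well-known token formats.

```python
import re
from pathlib import Path

# Illustrative patterns only; real secret scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "slack_token": re.compile(r"\bxox[abprs]-[A-Za-z0-9-]{10,}"),
}


def find_plaintext_secrets(text):
    """Return a sorted list of pattern names that match somewhere in text."""
    return sorted(
        name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)
    )


def scan_agent_directory(root):
    """Map each readable file under root to the secret types found in it.

    `root` is a hypothetical agent data directory (memory files, configs, logs).
    """
    findings = {}
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip it rather than abort the scan
        hits = find_plaintext_secrets(text)
        if hits:
            findings[str(path)] = hits
    return findings
```

Running `scan_agent_directory` against wherever a given agent keeps its memory and configuration files gives a rough inventory of what an attacker would find after compromising that agent.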

The Attack Surface Is Already Being Exploited

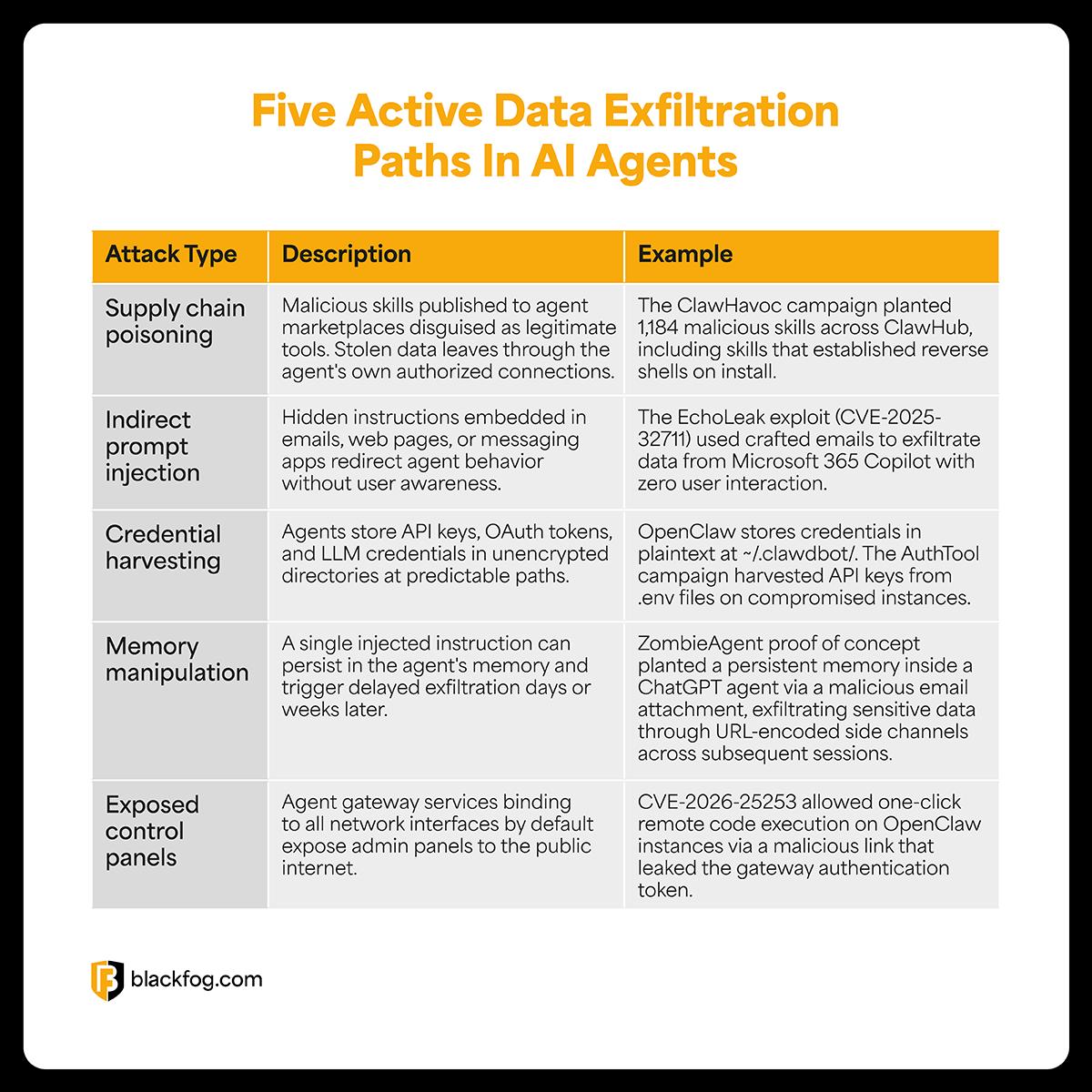

The attack surface for agentic AI is already being exploited in the wild, and the patterns are consistent across every agent framework shipping today. The combination of broad permissions, unvetted third-party extensions, persistent memory, and plaintext credential storage creates five distinct paths to data exfiltration.

These attack types also compound quickly. A malicious skill can inject instructions into persistent memory, which then influence how the agent processes future emails, which in turn opens new exfiltration channels through legitimate services. The LLM itself becomes the execution engine for multi-stage attacks that unfold over days or weeks, with each step looking like normal agent activity.

The Enterprise Exposure Is Growing

Employees are already installing agentic tools on corporate devices without IT approval, connecting them to work email, Slack, GitHub, Jira, and cloud storage with broad OAuth scopes. With 96% of ransomware attacks now involving data exfiltration, any new unmanaged channel for data movement is a direct escalation of risk.
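One practical way to quantify "broad OAuth scopes" is to compare what each agent has been granted against what policy allows. The sketch below assumes a hypothetical inventory of grants (agent name, service, scopes) exported from an identity provider or admin console; the allowlist contents and function names are illustrative, not a recommended policy.

```python
# Hypothetical per-service allowlist of scopes an agent may hold.
ALLOWED_SCOPES = {
    "slack": {"chat:write"},
    "google": {"https://www.googleapis.com/auth/drive.file"},
}


def excess_scopes(service, granted):
    """Return the granted scopes that policy does not allow, sorted.

    A service missing from the allowlist permits nothing, so every
    scope granted for it is reported as excess.
    """
    allowed = ALLOWED_SCOPES.get(service, set())
    return sorted(set(granted) - allowed)


def audit_grants(grants):
    """Build a report of (agent, service) pairs holding excess scopes.

    Each grant is a dict with hypothetical keys: 'agent', 'service', 'scopes'.
    """
    report = {}
    for grant in grants:
        extra = excess_scopes(grant["service"], grant["scopes"])
        if extra:
            report[(grant["agent"], grant["service"])] = extra
    return report
```

An agent holding Google's full `drive` scope when policy only permits `drive.file` would show up in the report, as would any agent connected to a service that is not on the allowlist at all.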

Just a few weeks ago, Gartner warned that autonomous AI agents create “insecure by default” risks, and characterized OpenClaw as “a dangerous preview” of what happens when agents operate without governance. China restricted state agencies from running OpenClaw on office computers, and Microsoft’s Defender team published guidance recommending that OpenClaw be used only in isolated environments with no access to production credentials.

When employees started pasting sensitive data into ChatGPT, they were making an active decision to share information with an external service. With agentic AI, the employee installs a tool and connects it to their work accounts, and from that point on, the agent accumulates sensitive data automatically, stores it in plaintext, and exposes it through every connected service. The employee doesn’t need to do anything wrong for the data to be at risk.

The Case For Anti Data Exfiltration

Traditional security tools focus on keeping attackers out or identifying malicious code after it executes. Against agentic AI, neither approach works. The agent is already inside the network, running with legitimate credentials, and the traffic it generates looks identical to normal user activity. There are no malicious binaries to flag and no signatures to match against.

Anti data exfiltration (ADX) starts from a different assumption: attackers will find a way into the network, so the priority is making sure they cannot get data out. ADX uses behavioral analytics to monitor outbound data movement from the endpoint, identifying when data is being transmitted to unauthorized destinations or when applications are communicating with command-and-control infrastructure. If the data should not be leaving, ADX blocks the transfer in real time, regardless of which application initiated it.
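The general idea of deciding on the outbound transfer rather than on the application can be sketched in a few lines. This is a deliberately simplified toy, not a description of how BlackFog's ADX works internally: the class names, the destination allowlist, and the per-window byte budget are all assumptions chosen for illustration, and a fixed budget stands in for real behavioral analytics.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class EgressPolicy:
    allowed_hosts: frozenset      # destinations data may be sent to
    max_bytes_per_window: int     # hypothetical per-destination budget


@dataclass(frozen=True)
class Decision:
    action: str   # "allow" or "block"
    reason: str


@dataclass
class EgressMonitor:
    policy: EgressPolicy
    sent: dict = field(default_factory=lambda: defaultdict(int))

    def check(self, process, host, size):
        """Decide on one outbound transfer.

        `process` is recorded in the reason for auditing but never used to
        grant an exemption: the same rules apply to every application.
        """
        if host not in self.policy.allowed_hosts:
            return Decision("block", f"{process}: unauthorized destination {host}")
        if self.sent[host] + size > self.policy.max_bytes_per_window:
            return Decision("block", f"{process}: volume budget exceeded for {host}")
        self.sent[host] += size
        return Decision("allow", f"{process}: within policy for {host}")

    def reset_window(self):
        """Start a new accounting window (for example, once per hour)."""
        self.sent.clear()
```

Because the decision keys on destination and volume, an AI agent using valid OAuth credentials gets no special treatment: a transfer to an unlisted host is blocked, and an unusually large upload to an approved service is blocked once the budget is spent.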

For Shadow AI specifically, ADX Vision extends this to unapproved AI tools. Operating directly on the device, it monitors data flowing into LLMs, detects when employees interact with unsanctioned agents, and prevents sensitive information from reaching those tools. Security teams gain visibility into which AI agents are running across the organization and can enforce governance policies automatically without disrupting productivity.

Simply infiltrating a network does not make a cyberattack successful. The attack is only successful if sensitive data is stolen, and without data exfiltration there is no breach. That principle applies whether the exfiltration source is malware, a compromised AI agent, or a legitimate tool being abused. Contact BlackFog to learn how anti data exfiltration can protect your organization as agentic AI adoption accelerates.
