LotAI: How Attackers Weaponize AI Assistants for Data Exfiltration

What happens when attackers use your approved AI tools as a data exfiltration channel? New research reveals how the LotAI technique turns Copilot and Grok into covert C2 relays.

Most organizations have spent the last two years rolling out AI assistants across their workforce. Microsoft Copilot, xAI’s Grok, and similar tools are now embedded in browsers, collaboration suites, and developer environments.

Security teams have largely focused on prompt injection as the primary AI risk, but new research reveals a different kind of threat: one in which the AI assistant isn’t the target of an attack but its transport layer.

In February 2026, security researchers demonstrated that AI assistants with web browsing capabilities could be manipulated into acting as covert command-and-control (C2) relays.

The underlying technique allows malware to send data to an attacker-controlled server and receive instructions back, all by routing traffic through an AI assistant the organization already trusts.

What Is Living off the AI (LotAI)?

Security teams are familiar with the concept of “Living off the Land” (LotL) techniques, where attackers use legitimate system tools like PowerShell or CertUtil to carry out malicious actions without dropping custom binaries. This works because defenders are unlikely to block tools the organization relies on to function properly.

LotAI applies the same logic to enterprise AI services. Instead of abusing built-in OS utilities, the attacker abuses AI assistants that the organization has explicitly approved. Traffic to copilot.microsoft.com or grok.com is expected, permitted, and rarely inspected with the same scrutiny as traffic to unknown domains. By routing C2 communications through these services, an attacker can blend malicious traffic into thousands of legitimate AI queries happening every day.

What makes LotAI particularly effective is that it doesn’t require API keys or authentication. Both Copilot and Grok allow anonymous web access with URL-fetching capabilities, which removes a kill switch defenders traditionally rely on: revoking the attacker’s credentials. You can’t revoke an API key that was never issued.

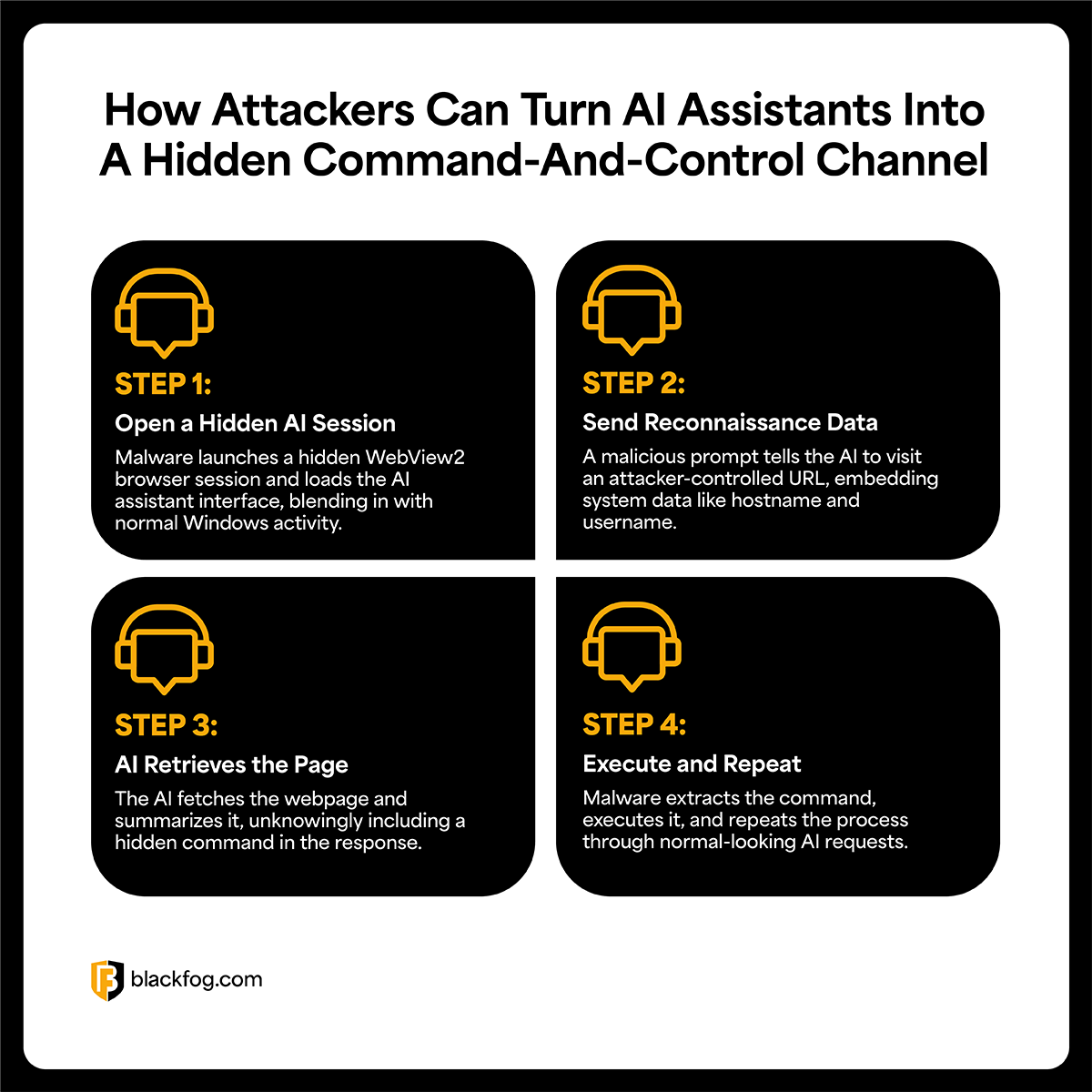

How the Attack Works

The attack assumes the attacker has already compromised a machine, whether through phishing, a supply chain compromise, or exploitation of a vulnerability. The AI assistant isn’t how they got in; it’s how they stay connected once they’re inside.

The core concept is simple: malware needs a way to communicate with the attacker without looking suspicious, and AI assistants that can fetch URLs provide exactly that channel. Data goes out in the URL request, commands come back in the AI’s response, and to any security tool watching the network, it all looks like normal AI usage.

The proof of concept used Copilot and Grok, but the technique could work with any AI assistant that can fetch URLs.

Step 1 – Open a Hidden Session

The malware opens a hidden WebView2 session and navigates to the AI assistant’s web interface. WebView2 is an embedded browser component preinstalled on Windows. Because it’s a legitimate Microsoft component, endpoint detection tools are unlikely to flag it.
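From the defender’s side, endpoint context is what a hidden WebView2 session can’t easily disguise. The sketch below is a minimal Python illustration. It assumes your endpoint telemetry can attribute each outbound connection to a process and to the application that hosts its WebView2 process tree. The `ConnectionEvent` record, the `EXPECTED_WEBVIEW2_HOSTS` allowlist, and the function names are hypothetical; they do not come from the research or from any product. The sketch flags `msedgewebview2.exe` traffic to AI domains when the hosting application isn’t on your list of known WebView2 hosts.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ConnectionEvent:
    """Hypothetical telemetry record for one outbound connection."""
    process: str      # image name of the process that opened the connection
    host_app: str     # application at the root of that WebView2 process tree
    destination: str  # destination hostname (e.g. from TLS SNI or DNS)

# Assumed lists; build real ones from your own approved-software inventory.
AI_DOMAINS = {"copilot.microsoft.com", "grok.com"}
EXPECTED_WEBVIEW2_HOSTS = {"ms-teams.exe", "olk.exe"}  # example values only

def is_ai_destination(host: str) -> bool:
    """True if host is one of AI_DOMAINS or a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)

def suspicious_webview2_ai_sessions(events: list[ConnectionEvent]) -> list[ConnectionEvent]:
    """Return WebView2 connections to AI domains whose host app is not expected."""
    return [
        e for e in events
        if e.process.lower() == "msedgewebview2.exe"
        and e.host_app.lower() not in EXPECTED_WEBVIEW2_HOSTS
        and is_ai_destination(e.destination)
    ]
```

Treat anything flagged this way as a lead for investigation, not proof of compromise. Plenty of legitimate software also embeds WebView2.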

Step 2 – Inject a Malicious Prompt

With the session open, the malware injects a prompt instructing the AI to visit an attacker-controlled URL. Reconnaissance data from the infected machine, such as the hostname, username, and installed software, is appended to that URL as query parameters. From the AI’s perspective, this is just a normal request to summarize a webpage.
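Step 2 leaves a trace a defender could look for: a URL whose query string carries values from the host itself. This only works if prompts are visible, for example through an endpoint agent or browser extension, which is an assumption here. The following is a minimal Python sketch of that heuristic. `flag_prompt_urls`, the `host_identifiers` input, and the 200-character threshold are illustrative choices, not taken from the research.

```python
import re
from urllib.parse import urlsplit, parse_qsl

# Rough URL matcher: stops at whitespace, angle brackets, and quotes.
URL_RE = re.compile(r"https?://[^\s<>\"']+")

def flag_prompt_urls(prompt: str, host_identifiers: set[str], max_query_len: int = 200) -> list[str]:
    """Return URLs in an AI prompt whose query strings look like exfiltrated host data."""
    identifiers = {i.lower() for i in host_identifiers if i}
    flagged = []
    for url in URL_RE.findall(prompt):
        query = urlsplit(url).query
        if not query:
            continue
        # parse_qsl percent-decodes values, so encoded identifiers still match.
        values = [v.lower() for _, v in parse_qsl(query, keep_blank_values=True)]
        leaks_identifier = any(ident in v for v in values for ident in identifiers)
        # Fallback: unusually long query strings are suspicious on their own.
        if leaks_identifier or len(query) > max_query_len:
            flagged.append(url)
    return flagged
```

Encoded or encrypted parameters would defeat the identifier match. That is why the sketch falls back on a simple length check, and why it should be one signal among several rather than a standalone rule.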

Step 3 – Fetch and Return Content

The AI fetches the attacker’s page and returns its content. In the proof of concept, the page was a comparison table of Siamese cat breeds, but one column, which appeared only when specific URL parameters were present, contained a Windows command. The AI summarized the page as instructed and included the command in its response without flagging anything suspicious.
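The command arrives inside ordinary response text. One defensive option is therefore to scan AI responses rendered in sessions with no user interaction for command-line patterns. The Python sketch below assumes you can capture that response text. The three regular expressions are example indicators only, a small sample of common living-off-the-land invocations. A real rule set would need tuning to avoid flagging legitimate answers that happen to contain commands.

```python
import re

# Example indicators only (assumed); tune against your own environment.
COMMAND_PATTERNS = [
    re.compile(r"\bpowershell(\.exe)?\b.*\s-(enc|encodedcommand|e)\b", re.IGNORECASE),
    re.compile(r"\bcmd(\.exe)?\s+/c\b", re.IGNORECASE),
    re.compile(r"\bcertutil(\.exe)?\b.*-urlcache\b", re.IGNORECASE),
]

def command_like_lines(response_text: str) -> list[str]:
    """Return lines of an AI response that resemble suspicious Windows command lines."""
    return [
        line.strip()
        for line in response_text.splitlines()
        if any(p.search(line) for p in COMMAND_PATTERNS)
    ]
```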

Step 4 – Parse and Execute Command

The malware parses the AI’s output, extracts the command, and executes it. Then the cycle repeats, with new reconnaissance data going out in the next URL request and new instructions coming back in the next response. Each loop looks like a normal AI interaction, making it very difficult to pick out from legitimate traffic.
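The repeating loop in Step 4 suggests a timing heuristic that doesn’t depend on content. Suppose the malware polls on a fixed schedule, which is an assumption, since real implants often add jitter. In that case, requests from a single process to an AI domain will arrive at suspiciously regular intervals. The Python sketch below measures this with the coefficient of variation of the gaps between requests. `looks_like_beacon` and its thresholds are hypothetical defaults, not values from the research.

```python
from statistics import mean, pstdev

def looks_like_beacon(timestamps: list[float], min_events: int = 6, max_cv: float = 0.1) -> bool:
    """Return True if request timestamps (in seconds) arrive at near-constant intervals.

    Uses the coefficient of variation (stdev / mean) of the gaps between requests.
    Assumption: interactive AI usage is irregular, while a simple polling loop is not.
    """
    if len(timestamps) < min_events:
        return False  # too few requests to judge regularity
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    avg = mean(gaps)
    if avg <= 0:
        return False  # all requests at the same instant; not a periodic pattern
    return pstdev(gaps) / avg <= max_cv
```

Grouping requests per process, rather than per user or per host, matters here. An employee’s genuine Copilot use and a background loop would otherwise blur together.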

Why Traditional Detection Misses It

This technique works because it exploits the trust assumptions most security architectures are built on. As mentioned previously, domains like copilot.microsoft.com are allowlisted and often exempt from deep packet inspection. Security teams treat AI domains as part of the trusted ecosystem, not as potential egress points for stolen data.

Traditional C2 detection relies on spotting traffic to known malicious infrastructure or anomalous connection patterns, and LotAI sidesteps both. The infected machine never connects to the attacker’s server directly; the AI service fetches the attacker’s page on the malware’s behalf. The only destination the endpoint talks to is a legitimate, high-reputation domain, the protocol is standard HTTPS, and the traffic pattern matches normal AI usage. Even behavioral analytics tools will struggle here, because at the network level, malware using Copilot to fetch C2 instructions looks identical to an employee asking Copilot to summarize a webpage.

From C2 Proxy to AI Data Exfiltration

The C2 relay technique is problematic on its own, but the research points to something broader. Once an attacker has a reliable communication channel through an AI assistant, the same interface can turn the AI into a decision engine for the malware itself.

Security researchers describe this as AI-driven (AID) malware. Instead of following hardcoded logic, the malware sends context from the compromised host to an AI model, which decides what to do next. The AI can help triage which files are worth exfiltrating and judge how aggressively to operate without triggering detection.

For data exfiltration specifically, this changes the math considerably.

Traditional ransomware operations tend to be noisy. They exfiltrate large volumes of data and generate exactly the kind of traffic detection systems are designed to catch. If an AI model can score which files are actually valuable, the attacker can instead take a small, high-value subset, producing far less traffic and making volume-based detection less effective.

What This Means for Enterprise Security

The main problem here isn’t a vulnerability in Copilot or Grok themselves; it’s how organizations treat AI traffic. When enterprises allow unrestricted outbound access to public AI services without inspection or logging, they create exactly the kind of blind spot attackers look for.

Security researchers were the first to document this technique, but we should expect to see it adopted more broadly. AI assistants are becoming standard enterprise infrastructure, web browsing capabilities are being added to more models, and organizations are allowlisting AI traffic by default.

The conditions that make LotAI effective are only becoming more common.

Traditional detection-based approaches will always struggle with LotAI because the traffic is designed to look legitimate, so focusing on the data itself is more effective. If unauthorized data can’t leave the device, it doesn’t matter whether the attacker routes it through a C2 server, a cloud API, or an AI assistant.
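The following is a minimal Python sketch of what focusing on the data can mean in practice. The egress decision depends only on what the outbound payload contains, never on where it’s going, so an allowlisted AI domain gets no special treatment. The `SENSITIVE_PATTERNS` table and `egress_decision` function are hypothetical. The AWS access key ID pattern and the classification label stand in for whatever a real data classification policy defines. This is not a description of how ADX Vision or any other product works.

```python
import re
from urllib.parse import unquote_plus

# Example patterns only (assumed); a real policy would come from data classification.
SENSITIVE_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "classification_label": re.compile(r"\bCONFIDENTIAL\b", re.IGNORECASE),  # hypothetical marking
}

def egress_decision(destination: str, payload: str) -> tuple[str, list[str]]:
    """Block outbound data that matches a sensitive pattern, whatever the destination.

    `destination` is deliberately not consulted: an allowlisted AI domain is
    treated exactly like an unknown server.
    """
    decoded = unquote_plus(payload)  # catch data hidden in URL-encoded query strings
    hits = [name for name, rx in SENSITIVE_PATTERNS.items() if rx.search(decoded)]
    return ("block" if hits else "allow", hits)
```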

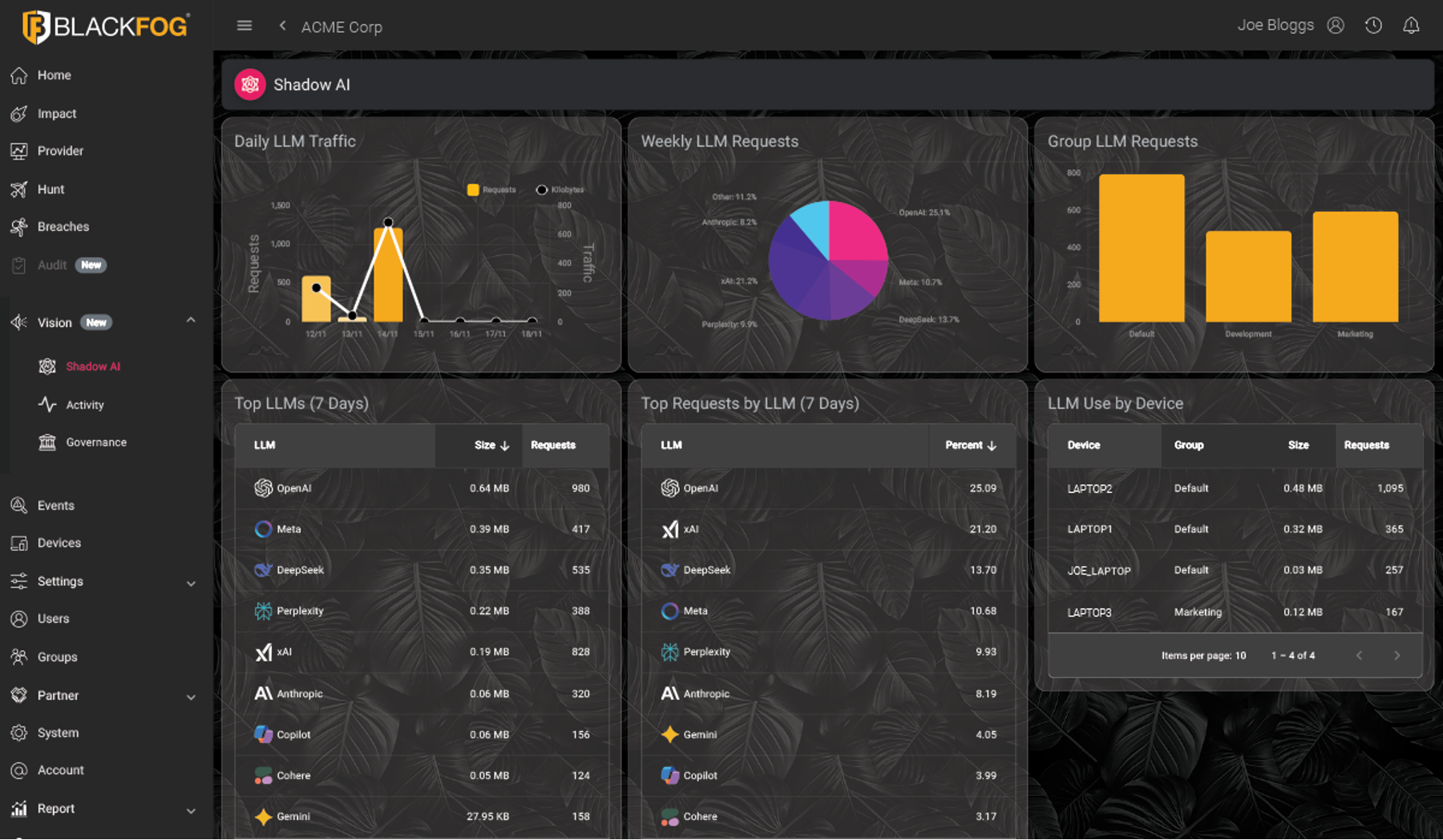

This is exactly the kind of threat ADX Vision was built for. Operating directly on the endpoint, ADX Vision gives security teams real-time visibility into how data interacts with AI tools, detecting shadow AI activity and preventing unauthorized data movement before it leaves the organization.

As LotAI and other AI-based exfiltration channels become more common, organizations need on-device protection that can control what data flows to AI services in real time. ADX Vision provides this by monitoring and controlling data transfers to AI tools, whether they’re sanctioned applications or unauthorized services running in the background, so sensitive data stays protected however attackers try to exfiltrate it.

Learn more here: ADX Vision.
