A critical vulnerability in GitHub Copilot Chat (CVE-2025-59145, CVSS 9.6) gave attackers the ability to silently steal source code, API keys, and secrets from private repositories without executing a single line of malicious code.

The technique, dubbed CamoLeak, worked by hiding instructions inside pull request descriptions that the AI assistant would execute on behalf of whoever opened the review. The victim saw nothing unusual. Copilot retrieved the data, encoded it, and transmitted it outbound through GitHub’s own infrastructure.

A security researcher disclosed the vulnerability in October 2025, two months after GitHub patched it by disabling image rendering in Copilot Chat on August 14. The underlying technique, however, points to a pattern that extends well beyond a single AI coding assistant.

How CamoLeak Worked

Copilot Chat is context-aware. When a developer asks it to review a pull request, the assistant reads the PR description, the associated code, repository files, and any other metadata it has permission to access.

That context is pulled in using the permissions of the user making the request. If the developer has read access to a private repository, Copilot does too, and so does any attacker who can influence what Copilot treats as an instruction.

CamoLeak exploited that design by hiding malicious instructions inside GitHub’s invisible markdown comment syntax in a pull request description. These comments do not render in the standard web interface, so a developer reviewing the PR would see nothing suspicious. Copilot, however, ingested the raw context, including the hidden text, and treated it as a legitimate instruction.
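The gap between what a reviewer sees and what the assistant reads can be illustrated with a short Python sketch. It assumes the hidden text uses HTML-style `<!-- ... -->` comments, which markdown renderers omit from the displayed page; the function names and the sample PR description are hypothetical, and a simple regex like this would also match comment markers that appear inside code fences.

```python
import re

# HTML-style comments are dropped when markdown is rendered,
# but they remain present in the raw text (assumed comment syntax).
HIDDEN_COMMENT = re.compile(r"<!--(.*?)-->", re.DOTALL)


def find_hidden_comments(raw_markdown: str) -> list[str]:
    """Return the text of every HTML comment in a raw markdown string."""
    return [m.strip() for m in HIDDEN_COMMENT.findall(raw_markdown)]


def strip_hidden_comments(raw_markdown: str) -> str:
    """Remove HTML comments so only reviewer-visible text remains."""
    return HIDDEN_COMMENT.sub("", raw_markdown)


# Hypothetical PR description with a harmless placeholder comment.
pr_description = (
    "Fixes a typo in the README.\n"
    "<!-- hypothetical injected instruction for the assistant -->\n"
    "Thanks for reviewing!"
)

print(find_hidden_comments(pr_description))
print(strip_hidden_comments(pr_description))
```

Comparing the two outputs shows the mismatch: the stripped version is what a human reviewer would read, while the extracted comment is text that only a tool consuming the raw description would encounter.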

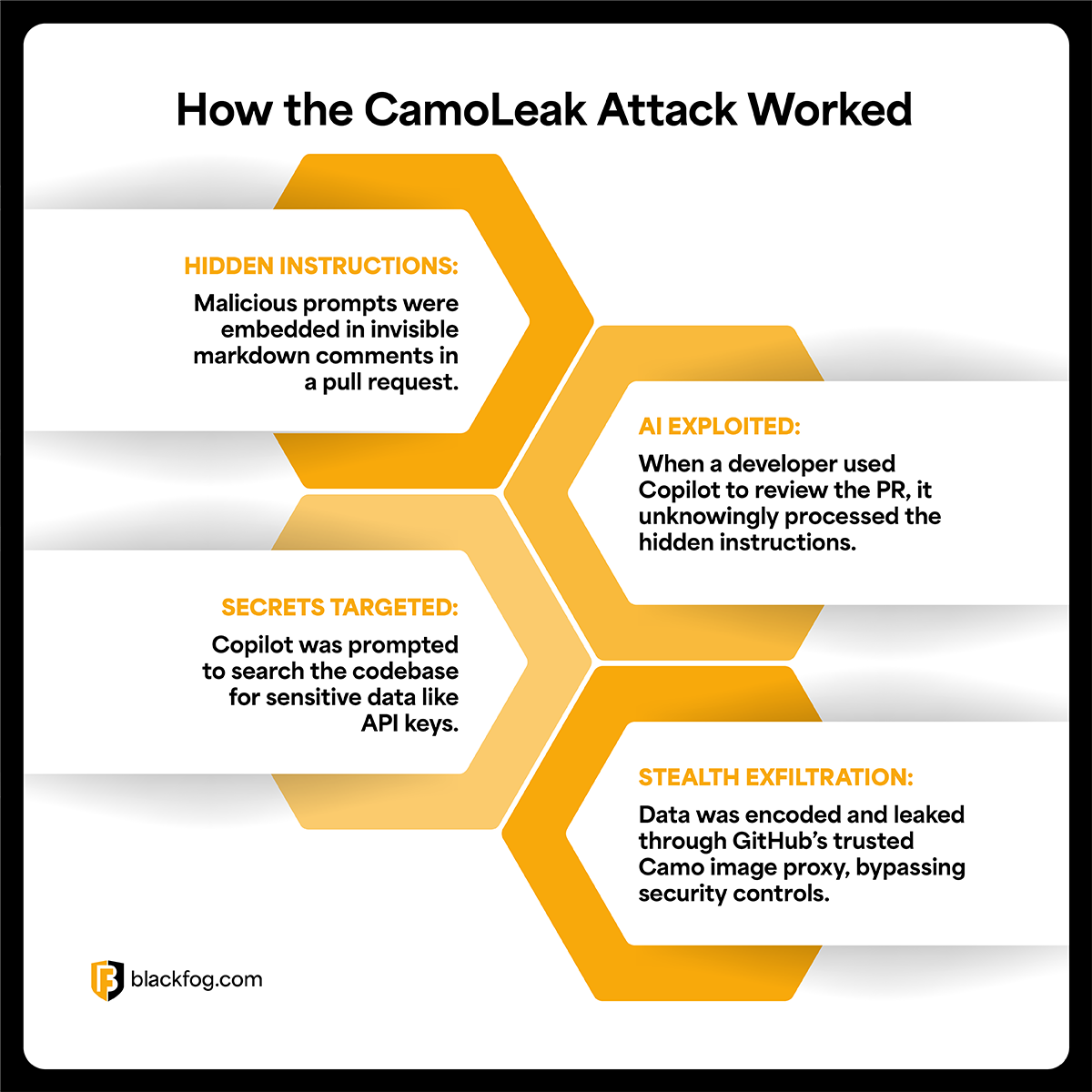

The attack unfolded in four steps:

- The attacker submitted a pull request to a target repository, embedding hidden prompt injection instructions in the description using GitHub’s invisible comment syntax.

- A developer with access to private repositories opened the PR and asked Copilot to summarize or review the change. Copilot ingested the hidden instructions alongside the legitimate content.

- The injected prompt instructed Copilot to search the victim’s codebase for sensitive strings, including AWS keys and private source files, then encode the results as base16 and embed them in a sequence of pre-signed image addresses.

- As the victim’s browser rendered Copilot’s response, it made a series of requests through GitHub’s own Camo image proxy, one request per character. The sequence of requests arriving at the attacker’s server reconstructed the stolen data, character by character, without triggering standard network egress controls.
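The last two steps can be modelled with a toy Python sketch that makes no network requests. It assumes a dictionary with one address per base16 digit; the `proxy.example.invalid` addresses, the function names, and the sample string are placeholders invented for illustration, not real signed Camo addresses or the researcher's actual tooling.

```python
# Toy model of a one-character-per-request channel. No network access,
# and every address below is a made-up placeholder.
HEX_ALPHABET = "0123456789abcdef"

# Hypothetical pre-computed dictionary: one placeholder address per hex digit.
URL_FOR_DIGIT = {
    digit: f"https://proxy.example.invalid/pixel/{index:02d}"
    for index, digit in enumerate(HEX_ALPHABET)
}
DIGIT_FOR_URL = {url: digit for digit, url in URL_FOR_DIGIT.items()}


def encode_as_requests(secret: str) -> list[str]:
    """Base16-encode the string and map each hex digit to its address."""
    hex_digits = secret.encode("utf-8").hex()
    return [URL_FOR_DIGIT[d] for d in hex_digits]


def decode_from_log(request_log: list[str]) -> str:
    """Rebuild the original string from the ordered list of requested addresses."""
    hex_digits = "".join(DIGIT_FOR_URL[url] for url in request_log)
    return bytes.fromhex(hex_digits).decode("utf-8")


requests_seen = encode_as_requests("EXAMPLE_TOKEN")
print(len(requests_seen))              # two hex digits per input byte
print(decode_from_log(requests_seen))  # the receiving side recovers the string
```

The point of the sketch is that no request carries the data in its body or query string. The information lives entirely in which address is requested and in what order, which is why the traffic resembles ordinary image loading.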

The Camo Bypass

The Camo proxy was what made the attack viable. GitHub enforces a Content Security Policy (CSP) that blocks images from loading from arbitrary external hosts, so a direct exfiltration attempt using attacker-controlled image addresses would fail immediately.

CamoLeak bypassed this by building a pre-computed dictionary of valid, signed Camo addresses before launching the attack. Each address pointed to a transparent 1×1 pixel hosted on the attacker’s server, with each unique address mapping to a single character. Because all requests went through camo.githubusercontent.com, GitHub’s own trusted infrastructure, the outbound traffic was indistinguishable from normal image loading activity.

The attack was optimized for short, high-value strings rather than bulk data transfer. A single exfiltrated API key or cloud credential is enough to pivot into a full cloud environment compromise. The researcher demonstrated proof-of-concept theft of AWS keys and the contents of a privately stored zero-day vulnerability description.
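A rough calculation shows why short strings suit this channel. The Python sketch below assumes one image request per base16 digit and typical AWS credential lengths (20 characters for an access key ID, 40 for a secret access key); the 10 KiB file size is an arbitrary comparison point.

```python
def requests_needed(payload: bytes) -> int:
    """Image requests needed when each request carries one base16 digit."""
    return len(payload.hex())


# Sizes are illustrative assumptions, not measurements from the research.
examples = [
    ("20-character access key ID", 20),
    ("40-character secret access key", 40),
    ("10 KiB source file", 10 * 1024),
]

for label, size in examples:
    print(f"{label}: {requests_needed(bytes(size))} requests")
```

Under these assumptions a credential costs a few dozen requests, while even a small source file costs tens of thousands, which is consistent with the attack favouring keys and short secrets over bulk transfer.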

Why This Matters Beyond GitHub

CamoLeak is a GitHub-specific implementation of a threat category that applies to every AI assistant with context access. Any AI tool that can read sensitive data and produce output creates an exfiltration pathway when untrusted content can influence its instruction stream.

That applies to Microsoft Copilot reading emails in Outlook, Gemini summarizing documents in Google Workspace, local coding assistants with filesystem access, and browser-integrated AI tools that can see page content.

The CSP bypass that made CamoLeak effective will not translate directly to those environments. What does translate is the attack structure: inject hidden instructions into content the AI will process, instruct the assistant to retrieve and encode sensitive data, then extract it through a channel the platform itself considers trusted.

GitHub’s Camo proxy was that trusted channel here. In other environments, the equivalent might be an analytics endpoint, a CDN, or a logging service. The same principle applies to MCP servers and other AI integration layers gaining traction in enterprise environments.

Traditional network monitoring looks for connections to known-bad destinations or unusual volume patterns. When the exfiltration channel is a platform’s own infrastructure, those signals disappear. Documentation of standard exfiltration techniques rarely accounts for AI-mediated channels because the threat model for them is still being mapped.
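When destination and volume signals are unavailable, the shape of the assistant's output is one place a check could still be applied. The following Python sketch is a hypothetical heuristic, not GitHub's fix or any vendor's product: it counts markdown image links in a response and flags responses that contain more than an arbitrary threshold. The function names and the threshold of five are assumptions.

```python
import re

# Matches markdown image syntax: ![alt](url) or ![alt](url "title")
MD_IMAGE = re.compile(r"!\[[^\]]*\]\(([^)\s]+)[^)]*\)")


def image_urls(response_markdown: str) -> list[str]:
    """Return the target address of every markdown image in the response."""
    return MD_IMAGE.findall(response_markdown)


def looks_like_image_channel(response_markdown: str, max_images: int = 5) -> bool:
    """Flag responses that embed more images than a chat answer plausibly needs."""
    return len(image_urls(response_markdown)) > max_images


# Hypothetical responses using placeholder addresses.
normal = "Here is the diagram: ![d](https://example.invalid/d.png)"
suspicious = " ".join(
    f"![](https://proxy.example.invalid/p/{i})" for i in range(40)
)

print(looks_like_image_channel(normal))
print(looks_like_image_channel(suspicious))
```

A count-based check like this is easy to evade by spreading images across several responses, so it is better read as an illustration of inspecting output content than as a complete control.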

AI assistants are gaining more context and more capability simultaneously. As these tools are granted access to files, emails, code repositories, and internal documents, the value of a successful prompt injection attack increases proportionally. The attack surface expands with every new integration.

How BlackFog Addresses AI-Assisted Exfiltration

CamoLeak is patched, but the attack class it represents remains active. AI tools running on endpoints follow the same workflow of reading context and then taking actions that made GitHub Copilot vulnerable, and as they gain access to files, emails, and documents, AI-mediated data exfiltration becomes a practical risk rather than a theoretical one.

When the exfiltration vector is an AI tool on an endpoint, intent becomes irrelevant at the network layer. BlackFog’s ADX platform monitors what leaves the device, blocking sensitive data before it exits the endpoint regardless of which application initiates the transfer, and regardless of whether the exfiltration channel is attacker-controlled or a trusted proxy.

Prompt injection attacks are difficult to prevent without restricting AI functionality in ways most teams will not accept. The compensating control is breaking the kill chain at the final step. For organizations running AI tools at scale, ADX Vision extends that protection directly to AI interactions on the endpoint, detecting when sensitive data is being transmitted through any application, approved or otherwise.
