Shadow AI and Governance: Why Traditional Control Is Failing CISOs

There is a growing tension inside most organizations right now, and it is becoming increasingly difficult to ignore.

CISOs are under pressure to establish governance around AI: setting policies, managing risk, and protecting sensitive data. At the same time, the business is moving quickly to embed AI into everyday workflows, driven by the need for speed, efficiency, and competitive advantage.

Those priorities are starting to pull in different directions, driving a steady rise in Shadow AI. In many cases, it’s not emerging despite governance, but because of it.

AI Adoption Has Already Outpaced Governance

AI is no longer something employees are experimenting with on the side. It has quickly become part of how work gets done.

BlackFog’s research shows that 86% of employees are already using AI tools weekly, with nearly half turning to tools that have not been approved by their organization. That level of adoption is difficult to contain, especially when it is tied directly to productivity.

Employees are under pressure to move faster and deliver more, and AI offers a clear way to do that. When governance frameworks introduce friction or limit access, employees find other ways to keep moving, often outside the scope of corporate oversight.

When Governance Pushes AI Out of Sight

Security teams have traditionally relied on control, defining what is allowed and restricting what is not. That model starts to break down when applied to AI.

These tools are widely available, often free, and easy to access from anywhere. Restricting them does not remove them from the environment. It simply moves their use beyond visibility.

Employees turn to personal devices, unmanaged browsers, and external platforms that operate outside the organization’s security controls. In doing so, they remove the visibility that governance depends on.

Shadow AI is not driven by malicious intent. It is a predictable response to friction. When the approved path slows people down, they take a different one.

The Real Exposure Lies in the Data

The real issue isn’t the use of AI tools; it is the data being entered into them. BlackFog’s findings show that 27% of employees have shared employee data and 33% have shared research or datasets. At the same time, many rely on free tools that offer little in the way of enterprise protections.

Once that data leaves the organization’s environment, control becomes largely theoretical. Visibility is limited, and there is little assurance around how that information is handled or retained. This is where the real risk sits, in the silent movement of data rather than the use of AI itself.

The Business Is Not Slowing Down

This is not just a user-level issue. The push toward AI adoption is often coming from leadership. BlackFog’s research shows that 69% of C-suite leaders prioritize speed over security, shaping behavior across the organization.

When speed becomes the priority, security controls can feel like an obstacle rather than an enabler. Governance may exist on paper, but behavior tells a different story.

Agentic AI Is Quietly Raising the Stakes

At the same time, the nature of AI use is beginning to change. We are moving beyond prompt-based tools toward systems that can take action, connect to other platforms, and operate with a level of autonomy. AI is starting to do things, not just suggest them.

AI agents can move data between systems, trigger workflows, and interact across environments without much direct oversight. Data exposure is no longer a single event, but part of a chain of automated activity that can be difficult to trace.

If Shadow AI already creates blind spots, Agentic AI has the potential to widen them significantly.

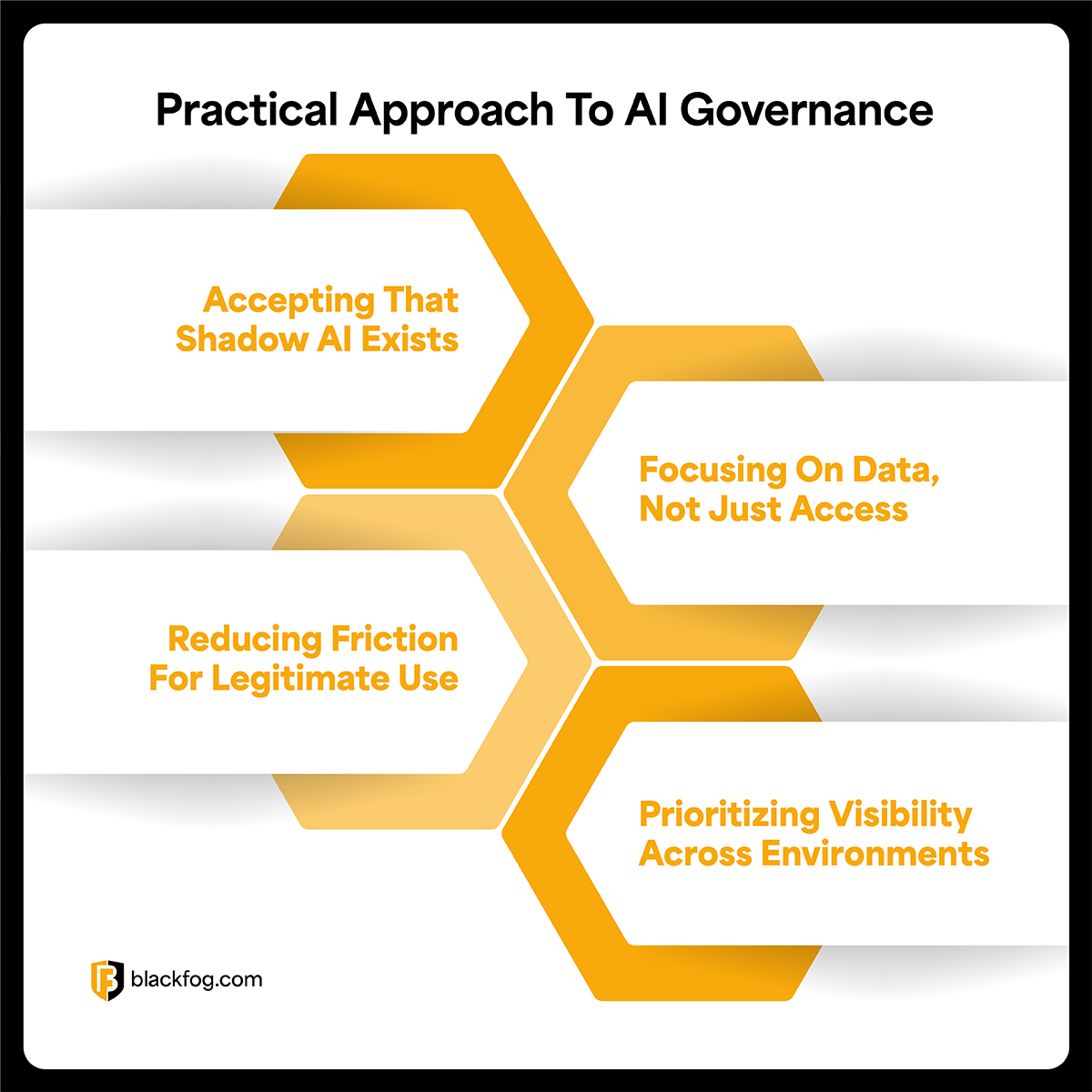

A More Practical Approach to AI Governance

The organizations that are adapting successfully are not stepping away from governance. They are adjusting it to reflect how AI is actually being used. That shift tends to show up in a few consistent ways.

Accepting That Shadow AI Exists

Most organizations have already reached a point where some level of unsanctioned AI usage is unavoidable. Treating it as something that can be fully eliminated often leads to blind spots rather than better control.

Focusing on Data, Not Just Access

The AI ecosystem is expanding so fast that trying to control every tool quickly becomes unsustainable. What remains consistent is the data. Understanding how sensitive information moves and ensuring it does not leave without oversight provides a more effective control point.
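To make the data-first idea concrete, here is a minimal Python sketch of a check that inspects outbound text rather than the tool it is headed for. Everything in it is an assumption for illustration: the pattern set, the function names `find_sensitive` and `review_outbound`, and the destination string are hypothetical, and a real deployment would rely on the organization's own data classification rules rather than two regular expressions.

```python
import re

# Hypothetical patterns for illustration only; real classification rules
# would be far broader and tuned to the organization's data.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_sensitive(text):
    """Count matches per sensitive category in a piece of outbound text."""
    counts = {}
    for label, pattern in SENSITIVE_PATTERNS.items():
        hits = pattern.findall(text)
        if hits:
            counts[label] = len(hits)
    return counts


def review_outbound(text, destination):
    """Build a review record; the same check applies to any destination."""
    findings = find_sensitive(text)
    return {
        "destination": destination,
        "findings": findings,
        "flagged": bool(findings),
    }


if __name__ == "__main__":
    record = review_outbound(
        "Contact jane.doe@example.com, SSN 123-45-6789",
        "chat.example-ai.test",  # hypothetical AI tool hostname
    )
    print(record)
```

The point of the design is that the check never needs a list of AI tools. Whichever tool an employee picks, the control keys on what the data looks like.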

Reducing Friction for Legitimate Use

When approved solutions are difficult to use, employees will continue to look for alternatives. Making secure options more accessible, such as licensed versions of AI products, reduces the incentive to work outside established controls.

Prioritizing Visibility Across Environments

Effective governance depends on understanding what is actually happening. This includes visibility across endpoints, browsers, and integrated systems, where much of this activity now takes place.

Many organizations are now looking for ways to surface AI-driven activity and data movement in real time, rather than relying on policy alone. That visibility makes it easier to identify risk and respond before it escalates.
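As a rough illustration of what surfacing that activity could look like, the Python sketch below totals the data sent to unapproved AI destinations from a list of observed events. The `Event` record, the `APPROVED` set, and the hostnames are all hypothetical. It assumes some endpoint or browser sensor already produces such events, which is the hard part and is not shown here.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Event:
    """One observed upload to an AI service (hypothetical record shape)."""
    user: str
    destination: str
    bytes_sent: int


# Hypothetical allowlist of sanctioned AI services.
APPROVED = {"approved-ai.example"}


def summarize_unapproved(events):
    """Return (destination, total_bytes) pairs, largest total first."""
    totals = Counter()
    for event in events:
        if event.destination not in APPROVED:
            totals[event.destination] += event.bytes_sent
    return totals.most_common()


if __name__ == "__main__":
    observed = [
        Event("alice", "approved-ai.example", 900),
        Event("bob", "free-chat.example", 200),
        Event("carol", "free-chat.example", 300),
        Event("dave", "other-ai.example", 100),
    ]
    for destination, total in summarize_unapproved(observed):
        print(destination, total)
```

A summary like this does not block anything. It gives the security team a ranked view of where data is going, so that response can be prioritized.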

The Shift from Control to Visibility

Shadow AI reflects a broader shift in how technology is adopted inside the enterprise. AI is now embedded in daily workflows, driven by the need for speed and efficiency. As that continues, and as more autonomous capabilities are introduced, the gap between governance and reality is likely to grow.

For CISOs, the question is no longer how to stop AI usage. The priority must be ensuring that when AI is used, the data moving through it remains visible, controlled, and protected.

